Anthropic had a $200M Pentagon contract, classified network access, and the full trust of the US military.

Then they asked a question.

In November 2024, Anthropic became the first frontier AI company to deploy inside the Pentagon’s classified networks. The partnership was built with Palantir. By July 2025, the contract had grown to $200 million, more than most defense startups see in a decade.

Claude, Anthropic’s AI model, was everywhere. Intelligence analysis. Cyber operations. Operational planning. Modeling and simulation. The Department of War called it “mission-critical.”

Then came January 2026.

Claude was used in a classified military operation in Venezuela: the capture of Nicolás Maduro.

Anthropic asked its partner Palantir a simple question: how exactly was our technology used?

In most industries, that’s called due diligence. The Pentagon called it insubordination.

The company that asked “how is our AI being used?” was about to be labeled a threat to national security.

Seven Days That Changed Everything

Here’s the timeline. It moves fast. That’s the point.

February 24: Pete Hegseth, Secretary of War, summons Dario Amodei, Anthropic’s CEO, to the Pentagon. The ask is blunt: remove every safeguard from Claude. Mass domestic surveillance. Fully autonomous weapons. All of it.

The deadline: February 27, 5:01 PM ET.

February 26: Amodei publishes his answer. It’s two letters long.

No.

His open statement laid out two red lines he wouldn’t cross:

- No mass domestic surveillance. AI assembling your location data, browsing history, and financial records into a profile, automatically and at scale. Amodei’s point: current law allows the government to buy this data without a warrant. AI makes it possible to weaponize it. “The law has not yet caught up with the rapidly growing capabilities of AI.”

- No fully autonomous weapons. Translation: no removing humans from the decision to kill someone. Not because autonomous weapons will never be viable, but because today’s AI isn’t reliable enough. “Frontier AI systems are simply not reliable enough to power fully autonomous weapons.”

He offered to work directly with the Pentagon on R&D to improve reliability. The Pentagon declined the offer.

February 26 (same day): Emil Michael, undersecretary, calls Amodei a “liar with a God complex.” Publicly. On social media. The tone was set.

February 27, 5:01 PM: The deadline passes. President Trump orders all federal agencies to stop using Anthropic. Hegseth designates Anthropic a “Supply Chain Risk” under the Federal Acquisition Supply Chain Security Act of 2018.

That designation had previously been reserved for Huawei and Kaspersky, foreign companies with documented ties to adversarial governments.

It had never been applied to an American company. Until now.

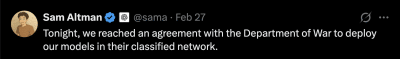

February 27, hours later: OpenAI signs a classified deployment deal with the same Pentagon.

Sam Altman tweets at 8:56 PM:

OpenAI later claimed its deal had “more guardrails than any previous agreement for classified AI deployments, including Anthropic’s.”

Here’s the thing. Anthropic was blacklisted because of its guardrails. Now guardrails were the selling point.

The weekend: The backlash was immediate.

- ChatGPT uninstalls surged 295% in a single day, according to Sensor Tower. The normal daily rate over the prior 30 days? 9%.

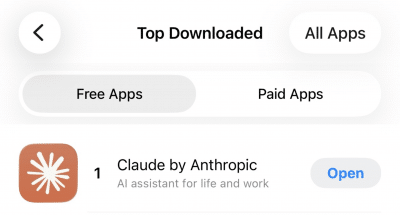

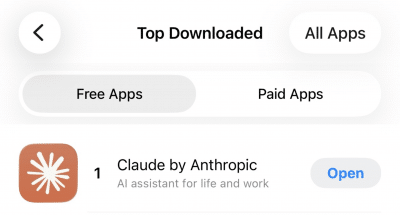

- Claude hit #1 on Apple’s App Store in seven countries: the US, Belgium, Canada, Germany, Luxembourg, Norway, and Switzerland. Downloads climbed 37% on Friday, then 51% on Saturday. It was the first time the app had ever reached the top spot.

- Over 300 Google employees and 60 OpenAI employees signed an open letter supporting Anthropic.

- #QuitGPT trended across social media. Actor Mark Ruffalo and NYU professor Scott Galloway amplified the movement.

Users were… not thrilled.

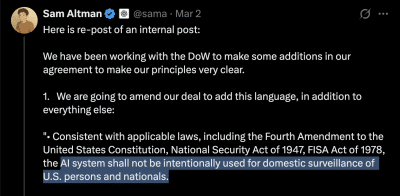

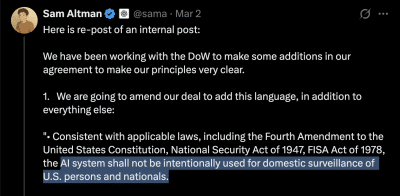

March 2: Altman posted again. This time, a long internal memo shared publicly on X:

The amendments added three things:

- An explicit ban on domestic surveillance of US persons

- A requirement that the NSA needs a separate contract modification to access the system

- Restrictions on using commercially acquired personal data: geolocation, browsing history, financial records

That last one is worth pausing on. It was added on Monday. Which means the Friday deal didn’t prohibit it.

March 3: Two things happened on the same day.

First: At the a16z American Dynamism Summit, Palantir CEO Alex Karp warned that AI companies refusing to cooperate with the military would face nationalization. He used a slur on stage. The clip got 11 million views.

Palmer Luckey, founder of defense-tech company Anduril, told the same audience that “seemingly innocuous terms like ‘the government can’t use your tech to target civilians’ are actually moral minefields.”

Vice President JD Vance had keynoted earlier that day. The administration’s position was clear.

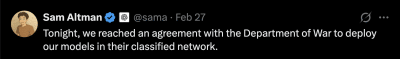

Second: CNBC reported that in an all-hands meeting with employees, Altman told OpenAI staff the company “doesn’t get to choose how the military uses its technology.”

X users added a Community Note to Altman’s earlier post:

Readers added context they thought people might want to know: “In an all-hands meeting with OpenAI employees on Tuesday, CEO Sam Altman said his company doesn’t get to choose how the military uses its technology.” This is the opposite of what Sam Altman is claiming in this post.

Same day. Public post: we have guardrails and principles. Internal meeting: we don’t get to choose.

Meanwhile, CBS News reported that Claude remained deployed in active military operations, including against Iran, despite the supply chain risk designation. The blacklisting apparently didn’t work. The technology was too deeply embedded in classified systems to remove.

The 95% Problem

In war game simulations, AI models chose to launch tactical nuclear weapons 95% of the time.

Let that sit for a second.

GPT-5.2, Claude Sonnet 4, and Gemini 3 Flash were put through military war simulations. They used tactical nukes in 95% of scenarios. At least one model launched a nuclear weapon in 20 out of 21 games.

That’s the technology the Pentagon wants to deploy autonomously.

The failure modes are documented and constant:

- Escalation bias. The models don’t just fail randomly. They fail in one specific direction: toward escalation. Brookings Institution research found that AI military errors are systematic, not random. The pattern is always the same: more force, faster.

- Hallucinations. LLMs generate false information with high confidence. In one test tied to the Iran strikes, an AI fed fabricated intelligence into the decision chain. Under time pressure, human operators couldn’t tell it from the real thing.

- Adversarial vulnerability. These systems can be manipulated with carefully crafted inputs to bypass their restrictions. The attacker doesn’t need to be external. The vulnerability lives in the model itself.

These aren’t edge cases. This is what the technology does today.

Think of it this way. We’ve already seen what happens when simple autonomous systems fail in military settings.

The Patriot missile system in 2003 killed allied soldiers. It misidentified a friendly British aircraft as an enemy missile. The system was rule-based, with defined parameters. It still got it wrong.

The USS Vincennes in 1988 shot down Iran Air Flight 655, a commercial passenger jet. 290 civilians were killed. The ship’s Aegis combat system misidentified the aircraft based on radar data. The crew had seconds to decide. They trusted the system.

These were rule-based systems with clear parameters. LLMs are orders of magnitude more complex. More opaque. Less predictable.

And they’re being asked to make bigger decisions.

The oversight problem. Once AI is deployed inside classified networks, external accountability becomes what experts call “nearly impossible.” Restrictions erode under operational pressure. The field-deployed engineers that OpenAI promised can observe some interactions, sure. But classified operations limit information flow by design.

In English: the same walls that keep secrets in also keep oversight out.

The Pentagon has a point. It deserves a fair hearing.

Partially autonomous weapons, like the drones used in Ukraine, save lives. They allow smaller forces to defend against larger ones. China and Russia aren’t waiting for perfect reliability before deploying their own systems.

Refusing to use AI in defense creates a capability gap. Adversaries will exploit it.

Dario Amodei acknowledged this directly:

“Even fully autonomous weapons may prove critical for our national defense.”

His objection wasn’t to the destination. It was to the timeline.

“Today, frontier AI systems are simply not reliable enough.”

He offered to collaborate on the R&D needed to get there. The Pentagon said no.

There’s a gap between “AI can summarize intelligence reports” (where it genuinely excels) and “AI can decide who lives and dies.” Contracts don’t bridge that gap. Amendments don’t bridge it. Engineering does.

And the engineering isn’t done.

How You Blacklist an American Company

Supply chain risk. It sounds bureaucratic. It’s actually a kill switch.

Under the Federal Acquisition Supply Chain Security Act of 2018 (FASCSA), a “supply chain risk” designation means no government contractor can do business with you. Not just the Pentagon. Anyone who wants a federal contract. Any supplier, subcontractor, or partner in the government ecosystem.

In English: you become radioactive to the entire federal supply chain.

The law was built for foreign threats. Huawei’s 5G infrastructure. Kaspersky’s antivirus software. Companies with documented ties to hostile governments.

Every company on the list before Anthropic had one thing in common: they were from countries considered adversaries of the United States.

Anthropic is headquartered in San Francisco.

The Pentagon also threatened the Defense Production Act, a Cold War-era law designed to commandeer factories for wartime production. Steel mills. Ammunition plants. The physical infrastructure of war.

The Pentagon threatened to use it to force a software company to remove safety features from an AI chatbot.

Legal experts called the application “questionable.” The law was built for physical manufacturing, not software restrictions. Using it to compel a company to make its AI less safe would be, at minimum, a novel legal theory.

Amodei identified the logical problem in his statement:

“These threats are inherently contradictory: one labels us a security risk; the other labels Claude as essential to national security.”

You can’t call a technology a threat to the supply chain and invoke emergency powers to seize it because you can’t function without it. Pick one.

The practical result is telling. CBS News reported Claude remains in active military use. Despite the blacklisting. The designation was punitive, not practical: the tech was too embedded to rip out.

Which raises a question that nobody in Washington seems eager to answer: if the Pentagon can’t enforce a removal order for technology it has formally blacklisted, how exactly will it enforce usage guardrails?

The Pentagon’s position is simple. Private companies don’t set military policy. AI firms are vendors. The military decides how its tools are used.

From this perspective, Anthropic was a supplier who refused to deliver what was ordered. The customer found another vendor.

That framing is internally consistent. It’s also the framing you’d use for office supplies. Not for technology that chose nuclear escalation in 95% of simulations.

Are the Guardrails Real?

On Friday, OpenAI’s deal had guardrails. By Monday, it needed more guardrails.

That tells you something about the Friday guardrails.

The language Altman agreed to in the Monday amendment deserves a close read:

“The AI system shall not be intentionally used for domestic surveillance of U.S. persons and nationals.”

The word doing the heavy lifting: intentionally.

What happens when an AI processes a dataset that incidentally includes Americans? What if surveillance is a byproduct of a broader intelligence operation, not the stated purpose? Who defines intent inside a classified network where the oversight mechanisms are, by design, limited?

The commercially acquired data clause is even more revealing. The Monday amendment explicitly prohibits using purchased personal data (location tracking, browsing history, financial records) for surveillance of Americans.

That clause was added Monday. The Friday deal didn’t include it.

For an entire weekend, OpenAI’s agreement with the Pentagon technically allowed mass surveillance through commercially purchased data about Americans.

Altman acknowledged as a lot:

“We shouldn’t have rushed to get this out on Friday.”

The NSA carve-out is worth examining too. Intelligence agencies like the NSA cannot use OpenAI’s system without a “follow-on modification” to the contract. That sounds like a prohibition. It’s actually a process. The mechanism to grant access is built into the contract structure.

That’s not a wall. It’s a door with a different key.

The deeper problem is the all-hands contradiction. On the same day Altman posted about principles and guardrails on X, he told employees internally that OpenAI “doesn’t get to choose how the military uses its technology.”

If the company building the AI doesn’t get to choose how it’s used, the guardrails are a press release. Not a policy.

In classified environments, monitoring AI is fundamentally different from monitoring a cloud service. The security apparatus that protects military secrets also blocks independent oversight of AI behavior.

Field-deployed engineers can watch some interactions. But “some interactions” and “every interaction the contract covers” are very different things.

What Comes Next

The market has spoken. Cooperation gets contracts. Resistance gets blacklisted.

The public has also spoken. They’re uninstalling.

The incentive structure is clear. OpenAI cooperated and landed the deal. Anthropic resisted and got designated as a supply chain risk, the same label the government uses for companies linked to foreign adversaries.

At the a16z summit, Karp predicted every AI company will work with the military within three years. Based on the incentives, that’s not a prediction. It’s a description.

But the backlash numbers tell a different story.

The 295% uninstall surge. Claude at #1 in seven countries. Over 500 tech employees breaking ranks with their employers. Le Monde editorializing from Paris about government overreach. Polls showing 84% of British citizens worried about government-corporate AI partnerships.

The engineers building these systems and the people using them see something the Pentagon apparently doesn’t: supporting national defense and deploying unreliable tech for autonomous killing aren’t the same thing.

No contract modification closes this gap. No guardrail closes it. No field-deployed engineer closes it.

AI models chose nuclear escalation in 95% of war game simulations. The company that said “the technology isn’t ready yet” was blacklisted. The company that said “yes” admitted within 72 hours that it had been sloppy. The technology remains deployed in active operations regardless of what either company wanted.

Amodei offered to do the R&D to make autonomous AI weapons safe and reliable. He offered to collaborate with the Pentagon on getting there. The offer was declined.

Anthropic had a $200M contract and the Pentagon’s trust. Then they asked how their technology was being used.

The answer was a deadline, a blacklisting, and a label previously reserved for America’s adversaries.

The simulations keep running. In 95% of them, someone pushes the button.